Sensor fusion has become a defining capability in modern intelligent devices, from autonomous vehicles and robotics to drones, wearables, and industrial automation. By blending data from multiple sensors such as IMUs, LiDAR, radar, cameras, magnetometers, and ultrasonic sensors, sensor fusion creates a more accurate and reliable understanding of the environment.

Instead of relying on a single imperfect data source, the system merges multiple streams to reduce uncertainty, improve precision, and deliver higher confidence in perception and decision-making.

Why Sensor Fusion Matters

Embedded devices often operate under strict constraints: limited power budgets, small footprints, and low computational resources. Despite these limits, they must perform complex tasks such as motion tracking, localization, object detection, and real-time control.

Single sensors tend to have weaknesses:

- Cameras struggle in low light.

- IMUs drift over time.

- LiDAR and radar lack texture or color context.

- GPS becomes unreliable indoors.

Sensor fusion combines strengths and compensates for weaknesses, helping systems stay reliable across environments.

When implemented through thoughtful embedded system design, fusion enables better performance in real-time applications like path planning, stabilization, and obstacle avoidance.

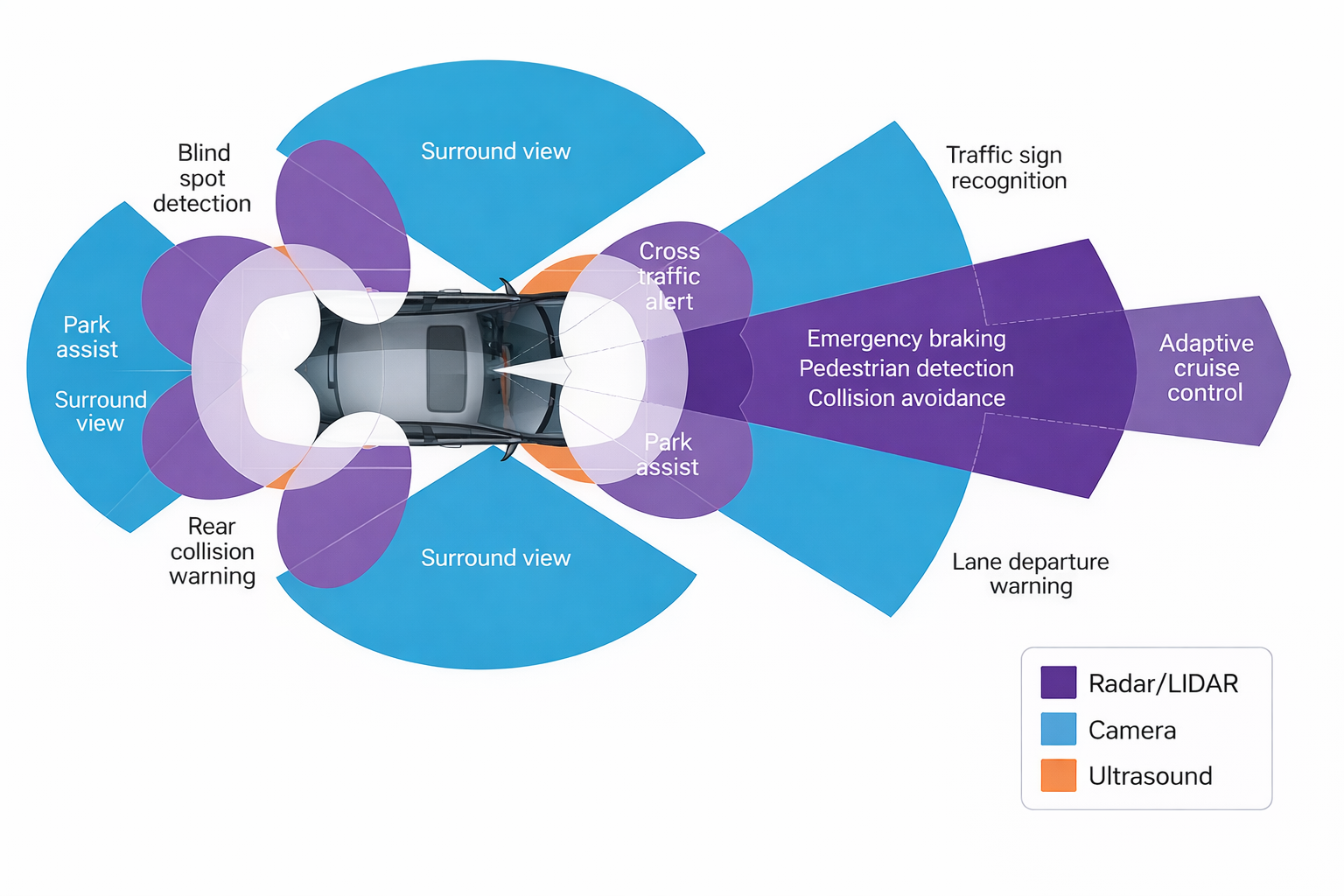

Visual Overview of Sensor Fusion

To better understand how multiple sensors work together, check out this infographic illustrating a multi-sensor fusion setup for embedded systems:

Multi-sensor Fusion for Robust Device Autonomy

It shows how IMUs, cameras, LiDAR, and other sensors complement each other to provide a unified perception of the environment. By visualizing how raw sensor data flows through filtering, calibration, and fusion algorithms, readers can see how the concepts discussed below are implemented in practice.

Common Sensor Fusion Approaches

Different applications demand different fusion methodologies. Popular approaches include:

1. Filter-Based (Model-Driven)

- Kalman Filter (KF)

- Extended Kalman Filter (EKF)

- Unscented Kalman Filter (UKF)

These methods are efficient for real-time control and widely used in robotics, drones, and automotive motion control.
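As a rough illustration of the filter-based family above, here is a minimal sketch of a scalar Kalman filter that smooths a noisy reading of a quantity assumed to be roughly constant. The function names and the noise values `q` (process noise) and `r` (measurement noise) are assumptions chosen for the example, not values from any particular sensor or library.

```python
def kalman_step(x, p, z, q, r):
    """One predict/update cycle of a scalar Kalman filter (constant-state model)."""
    # Predict: the state is assumed constant, so only the uncertainty grows.
    p_pred = p + q
    # Update: the gain weighs the measurement against the prediction.
    k = p_pred / (p_pred + r)
    x_new = x + k * (z - x)
    p_new = (1.0 - k) * p_pred
    return x_new, p_new


def kalman_filter(measurements, x0=0.0, p0=1.0, q=1e-4, r=0.25):
    """Run the filter over a sequence and return the estimate after each sample."""
    x, p = x0, p0
    estimates = []
    for z in measurements:
        x, p = kalman_step(x, p, z, q, r)
        estimates.append(x)
    return estimates


if __name__ == "__main__":
    # Hypothetical noisy readings of a value near 5.0.
    readings = [5.3, 4.8, 5.1, 4.9, 5.2, 5.0, 4.7, 5.1]
    print(kalman_filter(readings))
```

A real EKF or UKF extends the same predict/update structure to multi-dimensional, non-linear models; the scalar form is shown only because it keeps the arithmetic visible.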

2. Bayesian and Probabilistic Methods

Particle filtering and probabilistic graphical approaches handle uncertainty more flexibly, especially when the system model is non-linear or the sensor noise is non-Gaussian.
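To make the particle-filtering idea concrete, the sketch below shows one step of a simple one-dimensional bootstrap particle filter: diffuse the particles, weight them by how well they explain a measurement, then resample. The function names, the Gaussian likelihood, and the noise parameters are assumptions made for illustration.

```python
import math
import random


def particle_filter_step(particles, z, process_std, meas_std, rng):
    """One predict / weight / resample cycle for a 1D state."""
    # Predict: diffuse every particle with random process noise.
    predicted = [p + rng.gauss(0.0, process_std) for p in particles]
    # Weight: Gaussian likelihood of the measurement z given each particle.
    weights = [math.exp(-0.5 * ((z - p) / meas_std) ** 2) for p in predicted]
    if sum(weights) == 0.0:
        # Every particle is implausible; fall back to uniform weights.
        weights = [1.0] * len(predicted)
    # Resample: draw a new population in proportion to the weights.
    return rng.choices(predicted, weights=weights, k=len(predicted))


def estimate(particles):
    """Point estimate: the mean of the particle population."""
    return sum(particles) / len(particles)


if __name__ == "__main__":
    rng = random.Random(42)
    # Start with no idea where the state is within [-10, 10].
    particles = [rng.uniform(-10.0, 10.0) for _ in range(500)]
    for z in [3.1, 2.9, 3.0, 3.2, 2.8]:  # hypothetical measurements
        particles = particle_filter_step(particles, z, 0.1, 0.5, rng)
    print(estimate(particles))
```

Because the particles can represent any distribution shape, the likelihood function could be swapped for a non-Gaussian one without changing the rest of the loop. The cost is compute: accuracy depends on the particle count, which matters on constrained hardware.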

3. Machine-Learning-Based Fusion

Deep learning models combine LiDAR-camera or IMU-camera data for:

- Scene segmentation

- Object detection

- SLAM

These are becoming common in autonomous vehicles and advanced robotics.

4. Hybrid Systems

Many modern platforms combine model-driven control with learning-based perception for the best balance of accuracy and predictability.

Calibration, Synchronization, and Preprocessing: The Foundation of Fusion

Even the best fusion algorithms can fail if the inputs aren’t aligned correctly. Three foundational steps ensure stability:

- Time Synchronization: All sensor streams must be timestamped against a common clock so that samples from different sensors can be aligned accurately.

- Spatial Calibration: Physical alignment between sensors (e.g., LiDAR + camera) is critical.

- Pre-Processing: Filtering, denoising, edge detection, map alignment, and motion compensation improve downstream reliability.

Addressing these early ensures smoother workflows when designing embedded system solutions that depend on real-time perception.
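The first two steps can be sketched in a few lines. The example below assumes a higher-rate stream (such as an IMU) is linearly interpolated to a lower-rate sensor's timestamp, and that the extrinsic calibration between two sensors is a simplified 2D rotation plus translation. Real systems use full 3D extrinsics, and the function names and numbers here are hypothetical.

```python
import bisect
import math


def interpolate_at(timestamps, values, t):
    """Linearly interpolate a scalar stream at time t (timestamps sorted ascending)."""
    if not timestamps[0] <= t <= timestamps[-1]:
        raise ValueError("t lies outside the recorded time range")
    i = bisect.bisect_left(timestamps, t)
    if timestamps[i] == t:
        return values[i]
    t0, t1 = timestamps[i - 1], timestamps[i]
    w = (t - t0) / (t1 - t0)
    return values[i - 1] + w * (values[i] - values[i - 1])


def apply_extrinsics(point, yaw_rad, translation):
    """Map a 2D point from one sensor frame to another (rotate, then translate)."""
    x, y = point
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    tx, ty = translation
    return (c * x - s * y + tx, s * x + c * y + ty)


if __name__ == "__main__":
    # Hypothetical 200 Hz gyro samples and a camera frame captured at t = 0.0125 s.
    imu_t = [0.000, 0.005, 0.010, 0.015, 0.020]
    imu_rate = [0.10, 0.12, 0.11, 0.13, 0.12]
    print(interpolate_at(imu_t, imu_rate, 0.0125))
    # Hypothetical mounting: second sensor rotated 90 degrees and offset 0.1 m.
    print(apply_extrinsics((1.0, 0.0), math.pi / 2, (0.1, 0.0)))
```

Linear interpolation is only reasonable when the signal changes slowly relative to the sample rate; orientation data in particular usually calls for a rotation-aware interpolation instead.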

Architecting Fusion for Resource-Constrained Devices

Real-world embedded platforms require intelligent partitioning:

- IMU integration and low-latency filters run on real-time CPUs

- Vision and deep-learning processing run on GPUs, NPUs, or accelerators

- FPGAs or DSPs offload deterministic compute and signal conditioning

This balance requires experience, planning, and a design approach that weighs performance, cost, and longevity early in development.

Handling Failures and Improving Robustness

Sensor fusion must remain reliable even if some sensors malfunction or environmental conditions shift.

Best practices include:

- Confidence scoring and decision weighting

- Sensor redundancy

- Error prediction and anomaly detection

- Fallback operational modes

This resilience should be built into the product lifecycle from the start, especially for products intended for long-term deployment and scalability.
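One way to combine several of the practices above is sketched below, assuming each sensor reports a value together with a variance that acts as its confidence. Failed sensors are skipped, readings far from the median are rejected as anomalies, the rest are averaged with inverse-variance weights, and `None` signals that the caller should enter a fallback mode. The function name, the median-based gate, and the threshold are illustrative assumptions.

```python
import math
import statistics


def fuse_readings(readings, gate=3.0):
    """Fuse redundant (value, variance) readings; None marks a failed sensor.

    Returns (fused_value, fused_variance), or None if nothing usable remains.
    """
    valid = [r for r in readings if r is not None]
    if not valid:
        return None  # caller should switch to a fallback mode
    median = statistics.median([value for value, _ in valid])
    # Anomaly rejection: drop readings more than `gate` sigmas from the median.
    kept = [(value, var) for value, var in valid
            if abs(value - median) <= gate * math.sqrt(var)]
    if not kept:
        return None
    # Confidence weighting: lower variance means a larger weight.
    weights = [1.0 / var for _, var in kept]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, kept)) / total
    return fused, 1.0 / total


if __name__ == "__main__":
    # Hypothetical range readings in metres: one failed sensor, one outlier.
    print(fuse_readings([(10.0, 1.0), None, (10.2, 1.0), (50.0, 1.0)]))
```

The fused variance shrinks as more consistent sensors agree, which gives downstream logic a simple confidence score to act on.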

Example Use Case: IMU and Camera Fusion

One of the most commonly deployed fusion strategies pairs:

- High-frequency IMU data (for fast response and orientation updates)

- Lower-rate visual feedback (for drift correction and feature tracking)

This is used in AR/VR headsets, drones, smartphones, and autonomous robots. The IMU predicts motion quickly; the camera refines accuracy when imagery is available.
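The predict-then-correct pattern described above can be sketched with a simple complementary filter on a single yaw angle. This is a deliberately simplified stand-in for the visual-inertial estimators used in real products: it assumes the camera yields an absolute yaw on some ticks, ignores angle wraparound, and uses a hypothetical blend factor `alpha`.

```python
def fuse_yaw(gyro_rates, dt, camera_yaws, alpha=0.98, yaw0=0.0):
    """Integrate gyro rate every tick; blend in camera yaw when a frame arrives.

    gyro_rates:  angular rate (rad/s) for each IMU tick
    camera_yaws: dict mapping tick index -> yaw (rad) measured from imagery
    """
    yaw = yaw0
    history = []
    for i, rate in enumerate(gyro_rates):
        # Fast path: the IMU predicts motion on every tick.
        yaw += rate * dt
        # Slow path: a camera frame, when available, pulls the estimate back.
        if i in camera_yaws:
            yaw = alpha * yaw + (1.0 - alpha) * camera_yaws[i]
        history.append(yaw)
    return history


if __name__ == "__main__":
    # Hypothetical stationary device: the gyro has a 0.01 rad/s bias, sampled at 200 Hz.
    ticks = 2000
    rates = [0.01] * ticks
    frames = {i: 0.0 for i in range(0, ticks, 10)}  # camera at 20 Hz reports yaw = 0
    drifting = fuse_yaw(rates, 0.005, {})
    corrected = fuse_yaw(rates, 0.005, frames, alpha=0.9)
    print(drifting[-1], corrected[-1])
```

Running it shows the IMU-only estimate drifting steadily while the fused estimate stays bounded near the camera's value, which is the behavior the pairing is meant to achieve.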

Over time, refining this approach involves iterative testing, performance tuning, and continuous optimization, a key part of good embedded system design practice.

Testing and Validation

To ensure reliability, teams must validate using:

- Hardware-in-the-loop (HIL) setups

- Software simulation and synthetic replay

- Environmental stress testing

- Post-silicon validation

Real-world testing reveals edge cases like weather variations, low-light scenarios, vibration, or electromagnetic interference that simulations alone cannot capture.

This validation stage is often where partnering with an experienced embedded system company becomes essential, especially when scaling from prototype to production.

Partnering for Efficient Development

Bringing together the right algorithms, hardware mapping, calibration, safety logic, and iterative testing requires time, expertise, and planning. For many engineering teams, working with a partner that offers end-to-end support accelerates development while reducing risk.

Designing an embedded system architecture with sensor fusion is not a single-phase activity. It spans design, optimization, deployment, field validation, and post-launch management.

Tessolve: Your Engineering Partner in Sensor-Driven Innovation

At Tessolve, we help turn sensor-heavy concepts into reliable, production-ready embedded systems. With deep expertise in system design, board development, embedded software, post-silicon validation, testing frameworks, and product qualification, we support the entire development lifecycle.

Our engineering teams specialize in advanced perception solutions, including IMU-camera fusion, LiDAR-based object tracking, multi-sensor SLAM, and hardware-accelerated computational pipelines. We also offer system-level validation environments, HIL testbeds, and scalable test automation that ensure performance and reliability across environments and product stages.

Whether you are building next-generation autonomous platforms, industrial sensing systems, or high-precision embedded intelligence, Tessolve provides the expertise, infrastructure, and engineering confidence to help you go from concept to market faster and with fewer risks.

Frequently Asked Questions (FAQs)

1. What is sensor fusion in embedded systems?

Sensor fusion combines data from multiple sensors to improve accuracy, reliability, and decision-making in embedded devices.

2. Why is sensor fusion important for robotics and autonomous systems?

It enhances perception, reduces sensor errors, improves localization, and ensures safer, more precise control in real-world conditions.

3. Which algorithms are commonly used for sensor fusion?

Kalman filters, particle filters, Bayesian methods, and machine-learning models are widely used depending on latency and accuracy needs.

4. How do calibration and synchronization affect sensor fusion performance?

Proper calibration and time alignment reduce errors, keep fused data consistent, and limit drift in state estimation.