Augmented and virtual reality are no longer futuristic concepts; they’re powering industrial training, remote surgeries, assisted manufacturing, digital twins, and immersive entertainment experiences. But even the most visually stunning AR/VR system falls apart if latency is too high. Human perception is extremely sensitive to motion-to-photon delay, and even a 15–20 ms gap can break immersion, induce nausea, or make interactions feel sluggish.

That’s why ultra-low-latency engineering at the edge, especially across AR/VR ecosystems, has become a defining challenge in next-generation experience design.

Why Edge Processing and Embedded Systems Matter

Latency in AR/VR builds up in several pipeline stages:

- Sensor capture

- Pose tracking

- Rendering

- Encoding/decoding

- Network roundtrip

- Display output
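A simple way to reason about these stages is as a latency budget: under a serial model, the per-stage delays add up to the motion-to-photon total. The sketch below assumes stages run strictly back-to-back (real pipelines overlap stages, so treat the sum as an upper-bound estimate). The `Stage` type, `budget_report` function, and the stage timings in the example are hypothetical placeholders, not measurements from any real headset.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float


def budget_report(stages: List[Stage], budget_ms: float = 20.0) -> Tuple[float, bool, str]:
    """Return (total latency, whether it fits the budget, name of the slowest stage).

    Assumes a serial pipeline: every stage waits for the previous one to finish.
    """
    if not stages:
        raise ValueError("pipeline must contain at least one stage")
    total = sum(s.latency_ms for s in stages)
    slowest = max(stages, key=lambda s: s.latency_ms)
    return total, total <= budget_ms, slowest.name


# Hypothetical per-stage timings for an edge-assisted pipeline (illustrative only).
pipeline = [
    Stage("sensor capture", 1.0),
    Stage("pose tracking", 2.0),
    Stage("rendering", 8.0),
    Stage("encoding/decoding", 4.0),
    Stage("network roundtrip", 6.0),
    Stage("display output", 3.0),
]

total, fits, slowest = budget_report(pipeline)
print(f"total={total:.1f} ms, fits 20 ms budget: {fits}, slowest stage: {slowest}")
```

In this made-up example the pipeline overshoots a 20 ms target by 4 ms, and rendering is the first stage worth attacking.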

Running compute closer to the user, at the device level or on nearby edge servers, eliminates long-distance cloud travel time. Networks like Wi-Fi 6E and private 5G also reduce jitter and round-trip delay, enabling real-time responsiveness.
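Distance alone sets a floor on network delay. Light in optical fiber travels at roughly 200,000 km/s (about two-thirds of its speed in vacuum), so every 100 km of one-way path adds about 1 ms of round-trip time before any routing, queuing, or processing. The sketch below computes only that propagation floor; the function name and the 1,500 km and 10 km distances are hypothetical examples of a distant cloud region versus a nearby edge node.

```python
# Approximate speed of light in optical fiber: ~2e8 m/s, i.e. ~200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def propagation_rtt_ms(one_way_distance_km: float) -> float:
    """Round-trip propagation delay only; ignores routing, queuing, and processing."""
    if one_way_distance_km < 0:
        raise ValueError("distance must be non-negative")
    return 2.0 * one_way_distance_km / FIBER_KM_PER_MS


cloud_rtt = propagation_rtt_ms(1500.0)  # hypothetical distant cloud region
edge_rtt = propagation_rtt_ms(10.0)     # hypothetical metro edge node
print(f"cloud: {cloud_rtt:.1f} ms, edge: {edge_rtt:.1f} ms")
```

Even before real network overhead, the distant region would consume most of a 20 ms motion-to-photon budget, while the edge node’s propagation cost is negligible.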

Edge computing isn’t optional for realistic AR/VR; it’s what makes immersion believable.

Breaking Down the Low-Latency Architecture

1. Sensor and Tracking Pipeline

High-frequency IMUs, eye-tracking modules, and optical depth sensors are the first latency gate. Fast, accurate tracking enables techniques like foveated rendering, where only the user’s focus area is rendered at high resolution.

But this only works if tracking is extremely low latency; otherwise, visuals lag behind eye movement.
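The savings from foveated rendering can be estimated with simple arithmetic. Assuming a fraction `f` of the screen area is shaded at full resolution and the periphery at `1/k` resolution along each axis, the shaded-pixel count relative to full-resolution rendering is f + (1 − f)/k². The function name and the 10% fovea and 2× downscale values below are illustrative assumptions, not figures from a specific headset.

```python
def foveated_cost_fraction(fovea_fraction: float, periphery_downscale: float) -> float:
    """Shaded pixels relative to rendering the whole frame at full resolution.

    fovea_fraction: share of screen area rendered at full resolution (0..1).
    periphery_downscale: resolution divisor per axis outside the fovea (>= 1).
    """
    if not 0.0 <= fovea_fraction <= 1.0:
        raise ValueError("fovea_fraction must be in [0, 1]")
    if periphery_downscale < 1.0:
        raise ValueError("periphery_downscale must be >= 1")
    # Downscaling both axes by k reduces the peripheral pixel count by k squared.
    periphery = (1.0 - fovea_fraction) / periphery_downscale ** 2
    return fovea_fraction + periphery


print(foveated_cost_fraction(0.10, 2.0))  # ~0.325 of the full-resolution shading work
```

Roughly a third of the shading work remains in this example, but only while eye tracking keeps the full-resolution region under the user’s gaze; a late gaze update exposes the low-resolution periphery.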

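Sensor fusion combines IMU, camera, and depth data into a single pose estimate. Lightweight recursive filters, such as complementary and Kalman filters, are efficient for real-time control and widely used in robotics, drones, and automotive motion control.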
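To make the idea of a lightweight recursive filter concrete, here is a minimal single-axis complementary filter. It assumes an IMU that reports a gyro rate in rad/s and a raw accelerometer vector, and an x-forward, z-up axis convention for pitch (conventions differ between devices). The class and function names and the `alpha = 0.98` default are illustrative choices, not values from any particular product.

```python
import math


def accel_pitch(ax: float, ay: float, az: float) -> float:
    """Pitch (rad) implied by the gravity direction in an accelerometer reading.

    Axis and sign convention (x forward, z up when level) is an assumption of this sketch.
    """
    return math.atan2(-ax, math.hypot(ay, az))


class ComplementaryFilter:
    """Single-axis fusion of a fast-but-drifting gyro with a noisy-but-stable accelerometer."""

    def __init__(self, alpha: float = 0.98, initial_angle: float = 0.0) -> None:
        if not 0.0 <= alpha <= 1.0:
            raise ValueError("alpha must be in [0, 1]")
        self.alpha = alpha
        self.angle = initial_angle

    def update(self, gyro_rate: float, accel_angle: float, dt: float) -> float:
        # Integrating the gyro gives a low-latency estimate that drifts over time.
        gyro_estimate = self.angle + gyro_rate * dt
        # Blending in a little of the accelerometer angle pulls the drift back toward gravity.
        self.angle = self.alpha * gyro_estimate + (1.0 - self.alpha) * accel_angle
        return self.angle


# Hypothetical 1 kHz IMU stream: device held still, tilted to 0.2 rad of pitch.
f = ComplementaryFilter(alpha=0.98)
ax, ay, az = -9.81 * math.sin(0.2), 0.0, 9.81 * math.cos(0.2)
for _ in range(1000):
    pitch = f.update(gyro_rate=0.0, accel_angle=accel_pitch(ax, ay, az), dt=0.001)
print(round(pitch, 3))  # 0.2
```

`alpha` sets the crossover between the two sensors: higher values trust the gyro more and react faster, lower values correct drift more aggressively. Headset trackers fuse full 3D orientation plus camera data, so treat this only as an illustration of the principle.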

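Bayesian and probabilistic methods, such as particle filtering and probabilistic graphical approaches, handle uncertainty more flexibly, especially when dealing with non-linear or unstructured sensor noise.

2. Hardware Acceleration

Dedicated accelerators such as GPUs, DSPs, and FPGAs can offload latency-critical workloads, including:

- Neural inference (pose prediction)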

- Reprojection

- Video encoding/decoding

- SLAM computation

FPGAs are particularly useful in prototyping and scaling computationally heavy vision workloads while maintaining determinism.

3. Predictive Rendering and Reprojection

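Motion prediction estimates where the user’s head will be when the frame actually reaches the display and renders for that predicted pose. Reprojection (often called timewarp) then corrects the remaining error by warping the rendered frame with the latest tracking data just before it is displayed.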

Prediction buys back milliseconds, something invaluable when every millisecond counts.
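A minimal single-axis sketch of both ideas follows. It assumes constant angular velocity over the prediction horizon and a pure-rotation, yaw-only reprojection; the function names, the 20 ms horizon, and the 1,000-pixel focal length are illustrative assumptions. For a point at the image center, a yaw error of Δθ shifts the image by roughly f·tan(Δθ) pixels.

```python
import math


def predict_yaw(yaw_rad: float, yaw_rate_rad_s: float, horizon_s: float) -> float:
    """Constant-velocity extrapolation of head yaw to the expected display time."""
    return yaw_rad + yaw_rate_rad_s * horizon_s


def reprojection_shift_px(rendered_yaw_rad: float, latest_yaw_rad: float, focal_px: float) -> float:
    """Horizontal image shift at the image center needed to correct the yaw error.

    Pure-rotation model; the sign convention (positive = head turned further
    than the frame assumed) is an assumption of this sketch.
    """
    return focal_px * math.tan(latest_yaw_rad - rendered_yaw_rad)


rate = math.radians(100.0)  # a brisk 100 deg/s head turn
horizon = 0.020             # 20 ms from pose sample to photons

stale_error_deg = math.degrees(rate * horizon)  # error if the frame used no prediction
predicted = predict_yaw(0.0, rate, horizon)
# Suppose the head actually slowed slightly, ending 0.1 deg short of the prediction.
actual = predicted - math.radians(0.1)
print(f"unpredicted error: {stale_error_deg:.1f} deg")
print(f"reprojection shift: {reprojection_shift_px(predicted, actual, 1000.0):.2f} px")
```

Without prediction, the frame would be about 2° behind the head; with prediction, only the small residual is left for reprojection to fix.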

4. Network Optimization

Choosing low-latency transport protocols and prioritizing real-time data streams keeps network delay from becoming noticeable to users. Network slicing and real-time QoS rules help stabilize the experience on wireless links.
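On IP networks, one common way to prioritize a real-time stream is to mark its packets with a DSCP value so QoS-aware switches, routers, and Wi-Fi access points can queue them ahead of bulk traffic. The sketch below marks a UDP socket with Expedited Forwarding (DSCP 46) through the standard `IP_TOS` socket option. It assumes an IPv4 socket on a platform that honors `IP_TOS` (such as Linux), and whether the mark is respected depends entirely on network configuration. The helper name is hypothetical, and this is not a substitute for network slicing, which is configured on the network side.

```python
import socket

DSCP_EF = 46  # Expedited Forwarding per-hop behavior (RFC 3246)


def open_realtime_udp_socket(dscp: int = DSCP_EF) -> socket.socket:
    """Create an IPv4 UDP socket whose outgoing packets carry the given DSCP mark."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP is a 6-bit value")
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    # DSCP occupies the upper six bits of the IPv4 TOS byte; the low two bits are ECN.
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp << 2)
    return sock


sock = open_realtime_udp_socket()
print(sock.getsockopt(socket.IPPROTO_IP, socket.IP_TOS))  # 184 on Linux (46 << 2)
sock.close()
```

Pose and control packets would then be sent with `sock.sendto(payload, (host, port))`. UDP avoids head-of-line blocking from retransmissions, at the cost of handling loss in the application.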

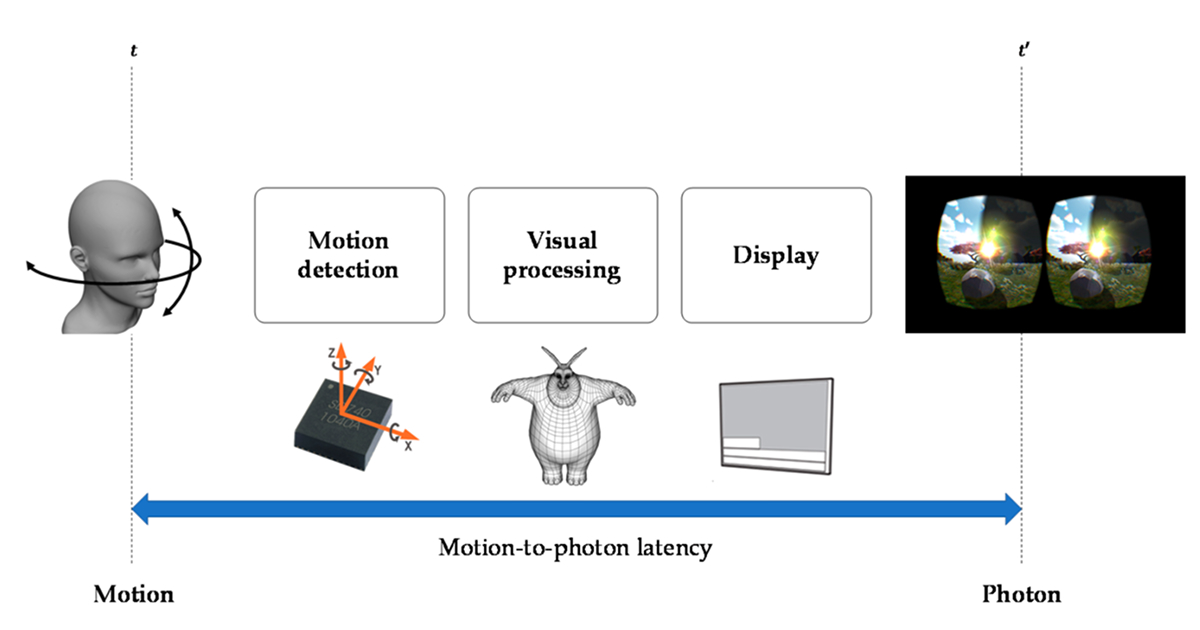

Understanding Motion-to-Photon Latency

To better visualize where delays occur in an AR/VR system, here’s a widely referenced infographic that breaks down the motion-to-photon pipeline and explains how latency accumulates through sensing, tracking, rendering, and display stages:

Motion-to-Photon Latency Breakdown (VR/AR Pipeline)

This visual helps make one key point clear: latency isn’t caused by a single subsystem, but by many micro-delays spread across firmware, hardware accelerators, sensors, network paths, and rendering workloads.

Most engineering strategies discussed in this blog, such as foveated rendering, prediction models, heterogeneous compute, and edge acceleration, are attempts to compress these stages and maintain responsiveness under sub-20 ms latency windows.

Practical AR/VR System Patterns

| Architecture Style | How It Helps Latency |
|---|---|
| Local-first compute with edge assist | Keeps AR/VR usable even if the network temporarily degrades |
| Foveated and multi-rate video streaming | Reduces bandwidth and compute while maintaining visual realism |
| Heterogeneous compute | Assigns tasks to CPU, GPU, and FPGA based on latency sensitivity |
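The heterogeneous-compute pattern can be illustrated with a toy greedy list scheduler: tasks are taken in order of tightest deadline (most latency-sensitive first), and each is placed on whichever device would finish it soonest. The `Task` type, device names, deadlines, and per-device execution estimates are all hypothetical; real schedulers also account for data transfer between devices, preemption, and power.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Task:
    name: str
    deadline_ms: float         # latest acceptable completion time within the frame
    cost_ms: Dict[str, float]  # estimated execution time on each capable device


def assign(tasks: List[Task], devices: List[str]) -> Dict[str, Tuple[str, float, bool]]:
    """Greedy placement: earliest deadline first, onto the earliest-finishing device.

    Returns task name -> (device, finish time in ms, deadline met?).
    Ignores inter-device transfer cost (an assumption of this sketch).
    """
    free_at = {d: 0.0 for d in devices}
    plan: Dict[str, Tuple[str, float, bool]] = {}
    for task in sorted(tasks, key=lambda t: t.deadline_ms):
        options = [(free_at[d] + c, d) for d, c in task.cost_ms.items() if d in free_at]
        if not options:
            raise ValueError(f"no available device can run {task.name}")
        finish, device = min(options)
        free_at[device] = finish
        plan[task.name] = (device, finish, finish <= task.deadline_ms)
    return plan


# Hypothetical per-frame workload and execution estimates.
tasks = [
    Task("pose prediction", 2.0, {"cpu": 1.5, "fpga": 0.3}),
    Task("render", 11.0, {"gpu": 8.0, "cpu": 40.0}),
    Task("encode", 14.0, {"gpu": 3.0, "fpga": 2.0}),
]
for name, (device, finish, ok) in assign(tasks, ["cpu", "gpu", "fpga"]).items():
    print(f"{name}: {device}, done at {finish:.1f} ms, on time: {ok}")
```

Because the GPU is busy rendering, the encoder lands on the FPGA in this example even though the GPU could encode it quickly in isolation.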

Optimization Checklist for Engineers

If you’re aiming for immersive responsiveness, prioritize:

- Reducing pipeline buffers

- Measuring motion-to-photon latency, not just FPS

- Hardware-accelerated codecs

- Thermal and power-aware scheduling

- Edge-assisted predictive rendering
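Measuring motion-to-photon latency rather than FPS matters because two pipelines can both run at 100 FPS yet differ widely in latency. The sketch below assumes you already log, per frame, the timestamp of the motion sample that drove it and the time its photons were observed (for example, with an external photosensor). The function names and the synthetic timings are hypothetical.

```python
import math
import statistics
from typing import Dict, List, Tuple


def mtp_stats(samples: List[Tuple[float, float]]) -> Dict[str, float]:
    """samples: (motion_ts_ms, photon_ts_ms) pairs, one per frame."""
    if not samples:
        raise ValueError("need at least one sample")
    lat = sorted(photon - motion for motion, photon in samples)
    rank = max(1, math.ceil(0.99 * len(lat)))  # nearest-rank 99th percentile
    return {
        "mean_ms": statistics.fmean(lat),
        "p99_ms": lat[rank - 1],
        "jitter_ms": statistics.pstdev(lat),
        "max_ms": lat[-1],
    }


def fps(photon_ts_ms: List[float]) -> float:
    """Average frame rate implied by consecutive display timestamps."""
    if len(photon_ts_ms) < 2:
        raise ValueError("need at least two frames")
    return (len(photon_ts_ms) - 1) * 1000.0 / (photon_ts_ms[-1] - photon_ts_ms[0])


# Synthetic data: both pipelines present a frame every 10 ms (100 FPS),
# but the second one buffers more frames before display.
fast = [(i * 10.0, i * 10.0 + 15.0) for i in range(100)]
slow = [(i * 10.0, i * 10.0 + 40.0) for i in range(100)]
for label, data in (("fast", fast), ("slow", slow)):
    print(label, round(fps([p for _, p in data]), 1), mtp_stats(data)["mean_ms"])
```

An FPS counter reports the two pipelines as identical; only the motion-to-photon numbers reveal the extra 25 ms of buffering.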

Latency reduction is often incremental, but every step compounds into user-perceived realism.

Role of Embedded Engineering in AR/VR Latency

This is where embedded system design becomes crucial. Ultra-low-latency experiences demand tight synergy between hardware accelerators, firmware timings, and OS scheduling. Teams applying embedded system design know that microseconds matter, and fine-tuning drivers, DMA pathways, RTOS timers, and sensor synchronization is often the difference between usable and exceptional.
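Sensor synchronization often comes down to putting every measurement on one clock and resampling. As a minimal sketch, assuming IMU samples and camera exposures already share a microsecond timestamp base (through hardware sync or a calibrated offset), the IMU reading at a camera mid-exposure time can be linearly interpolated. The function name and sample values are hypothetical.

```python
from bisect import bisect_left
from typing import Sequence


def interpolate_at(timestamps_us: Sequence[int], values: Sequence[float], t_us: float) -> float:
    """Linearly interpolate a sampled signal at time t_us.

    timestamps_us must be strictly increasing; times outside the sampled
    range are clamped to the first or last sample.
    """
    if not timestamps_us or len(timestamps_us) != len(values):
        raise ValueError("need matching, non-empty timestamp and value sequences")
    if t_us <= timestamps_us[0]:
        return values[0]
    if t_us >= timestamps_us[-1]:
        return values[-1]
    i = bisect_left(timestamps_us, t_us)  # first sample at or after t_us
    t0, t1 = timestamps_us[i - 1], timestamps_us[i]
    w = (t_us - t0) / (t1 - t0)
    return values[i - 1] + w * (values[i] - values[i - 1])


# 1 kHz gyro samples (rad/s) and a camera exposure centered between two of them.
imu_t = [0, 1000, 2000, 3000]
gyro_z = [0.0, 0.5, 1.0, 1.0]
print(interpolate_at(imu_t, gyro_z, 1500))  # 0.75
```

Aligning to the exposure midpoint rather than the frame-arrival time keeps transfer and driver delays out of the pose estimate.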

When designing embedded system components such as sensor fusion pipelines and ASIC-based acceleration modules, verification should cover both technical performance and perception-based user testing. Engineers designing embedded system solutions for AR/VR must validate under real edge-network conditions rather than only in lab setups.
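Validating under edge-network conditions means testing against delay variation, not just the average. A quick Monte Carlo sketch shows why, assuming a normally distributed one-way delay (real wireless jitter is usually heavier-tailed) and a hypothetical per-frame network deadline. The function name and every parameter below are illustrative.

```python
import random


def late_frame_ratio(base_ms: float, jitter_ms: float, deadline_ms: float,
                     frames: int = 10_000, seed: int = 1) -> float:
    """Fraction of frames whose simulated network delay exceeds the deadline."""
    if frames <= 0:
        raise ValueError("frames must be positive")
    rng = random.Random(seed)  # seeded so runs are repeatable
    late = 0
    for _ in range(frames):
        delay = max(0.0, rng.gauss(base_ms, jitter_ms))  # delays cannot be negative
        if delay > deadline_ms:
            late += 1
    return late / frames


# Same 5 ms average delay, different jitter, against an 8 ms network allowance.
print(late_frame_ratio(5.0, 0.5, 8.0))  # essentially no late frames
print(late_frame_ratio(5.0, 2.0, 8.0))  # a noticeable share of frames arrive late
```

Both links look identical on an average-latency dashboard; only the jittery one produces visible stutter, which is why lab setups with clean links can hide problems.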

Tools and Building Blocks That Matter

- Real-time kernels and prioritization-aware schedulers

- ML accelerators and optimized inference runtimes

- Low-latency codec and runtime pipelines (foveated video, L4 encoding modes, OpenXR runtimes)

- FPGA accelerators for deterministic real-time computations

These technologies work best when integrated through an advanced design solution strategy rather than as loosely combined components. A well-planned architecture improves efficiency and reduces redesign cycles later.

Why Working With Specialists Helps

AR/VR edge systems require multidisciplinary capability: RF, embedded firmware, computer vision, silicon validation, and system integration. Partnering with an experienced embedded system company speeds up development, reduces compatibility risks, and ensures the design meets its performance targets.

A trusted embedded system company brings hardware bring-up, firmware tuning, accelerator design, and measurement infrastructure, all essential for pushing motion-to-photon latency toward single-digit milliseconds.


Tessolve Advantage: Engineering AR/VR Performance from Silicon to System

At Tessolve, we specialize in building high-performance AR/VR and edge compute solutions by combining system engineering, embedded development, and silicon validation expertise into one workflow. Our engineering teams support everything from FPGA prototyping, ASIC/SoC bring-up, firmware optimization, and edge acceleration pipelines to post-silicon validation and testing.

We help product teams reduce motion-to-photon delay, accelerate vision pipelines, and validate performance under real 5G and Wi-Fi conditions. Whether you require hardware optimization, edge-assisted rendering strategies, or hybrid computing architectures, Tessolve provides end-to-end expertise to build scalable AR/VR platforms ready for field deployment.

If you’re building next-generation immersive systems and need support across architecture, verification, firmware, edge optimization, or acceleration engineering, Tessolve is ready to collaborate and help turn your ideas into market-ready products.

Frequently Asked Questions (FAQs)

1. Why is low latency so important in AR/VR systems?

Low latency ensures realistic interaction, prevents motion sickness, improves tracking accuracy, and creates a smooth immersive experience for the user.

2. What is motion-to-photon latency in AR/VR?

Motion-to-photon latency is the delay between a user’s physical movement and the updated visual appearing in the headset.

3. How does edge computing reduce latency in AR/VR applications?

Edge computing processes data closer to the user, eliminating long cloud round-trip delays and improving real-time responsiveness.

4. Can embedded hardware acceleration improve AR/VR performance?

Yes, accelerators like GPUs, DSPs, and FPGAs execute time-critical tasks faster, significantly lowering processing delays and improving responsiveness.