Virtualization has steadily moved from servers into the embedded world, becoming a powerful technique for consolidating functions, improving reliability, and extracting maximum performance from multi-core Systems on Chip (SoCs). As hardware becomes increasingly capable, engineering teams are using virtualization to separate workloads, improve determinism, and optimize resource usage while meeting strict safety and performance targets.

This blog post walks through how embedded virtualization works on multi-core SoCs, the design decisions that affect performance, and the practical considerations engineers should keep in mind. Whether you are building automotive ECUs, industrial controllers, edge devices, or advanced consumer electronics, virtualization is a core part of modern embedded system design.

Why Virtualization Matters in Embedded Systems

Embedded virtualization focuses on isolating mixed-criticality workloads and maintaining real-time behavior. Benefits include:

- Reducing bill-of-materials (BOM) cost by consolidating hardware

- Improving safety and fault containment

- Ensuring real-time tasks get guaranteed resources

- Running Linux, RTOS, and bare-metal code together without interference

A lightweight Type-1 hypervisor or partitioning hypervisor ensures each workload has its own partition, with dedicated cores, memory, and I/O, making the system predictable and maintainable.
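What "its own partition" means in practice can be sketched as a static partition table that is checked once at build or boot time. The `partition_t` structure, its field names, and the 2 MiB alignment rule below are hypothetical; every real hypervisor defines its own configuration format. The check enforces the three properties described above: no core, no device, and no byte of RAM belongs to two partitions.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical static partition description; field names are illustrative. */
typedef struct {
    const char *name;
    uint32_t    core_mask;  /* bit n set => physical core n belongs to this partition */
    uint64_t    mem_base;   /* start of the partition's physical RAM region */
    uint64_t    mem_size;   /* region size in bytes */
    uint32_t    dev_mask;   /* bit n set => device n is passed through exclusively */
} partition_t;

/* Assumed alignment so each region can be mapped with 2 MiB blocks. */
#define REGION_ALIGN (2ULL * 1024 * 1024)

/* Example layout: RTOS on core 0 with 64 MiB, Linux on cores 1-3 with 1 GiB. */
static const partition_t example_layout[] = {
    { "rtos",  0x1u, 0x80000000ULL, 64ULL << 20, 0x1u }, /* device 0, e.g. a CAN controller */
    { "linux", 0xEu, 0x84000000ULL, 1ULL << 30,  0x6u }, /* devices 1 and 2 */
};

/* Returns 0 if the layout is valid, or a negative code for the first problem found. */
int validate_partitions(const partition_t *p, size_t n)
{
    assert(p != NULL || n == 0);
    for (size_t i = 0; i < n; i++) {
        if (p[i].core_mask == 0)
            return -1;                       /* every partition needs at least one core */
        if (p[i].mem_size == 0 ||
            p[i].mem_base % REGION_ALIGN != 0 ||
            p[i].mem_size % REGION_ALIGN != 0)
            return -2;                       /* region empty or misaligned */
        for (size_t j = i + 1; j < n; j++) {
            if (p[i].core_mask & p[j].core_mask)
                return -3;                   /* a core is assigned twice */
            if (p[i].dev_mask & p[j].dev_mask)
                return -4;                   /* a device is assigned twice */
            /* half-open ranges [base, base + size) must not intersect */
            if (p[i].mem_base < p[j].mem_base + p[j].mem_size &&
                p[j].mem_base < p[i].mem_base + p[i].mem_size)
                return -5;
        }
    }
    return 0;
}
```

Calling `validate_partitions(example_layout, 2)` returns 0; giving the Linux partition core 0 as well would return -3.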

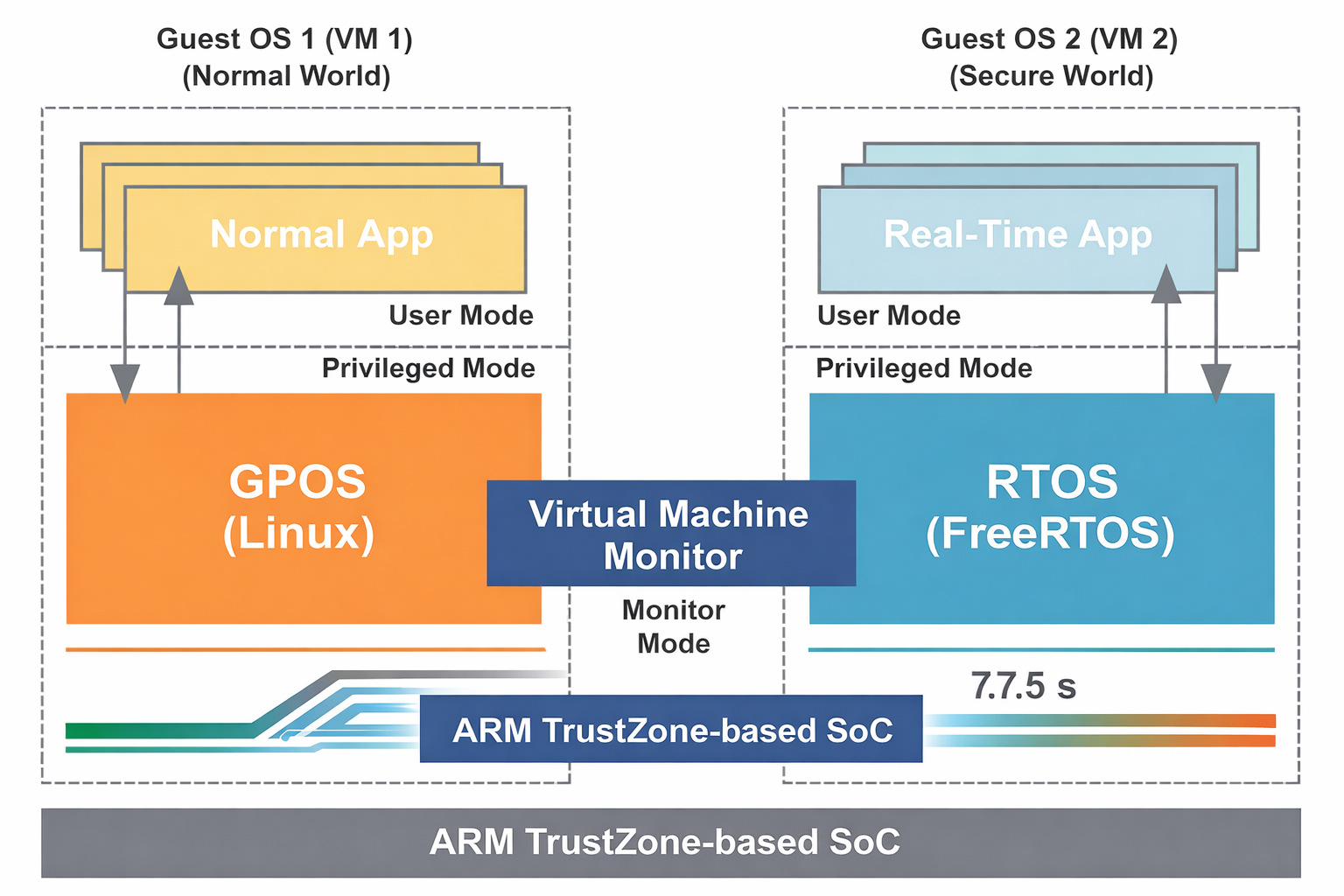

Visualizing Architectural Isolation and Consolidation

The complexity of running heterogeneous workloads on a single SoC is best understood by visualizing the distinct layers and partitions created by the hypervisor. This architecture achieves strong isolation for critical tasks while maximizing hardware utilization.

Embedded Virtualization Architecture

A visual representation of how a Type-1 hypervisor manages and isolates mixed-criticality workloads: a Real-Time Operating System (RTOS) partition is kept separate from a General-Purpose Operating System (GPOS) partition, and each often runs on dedicated cores to maximize determinism and performance.

Selecting the Right Hypervisor Model

Choosing the right hypervisor model sets the foundation for performance:

- Full Virtualization: Guests remain unmodified but may experience higher overhead

- Paravirtualization: Guests are modified to use hypercalls for memory and I/O operations instead of trapping on privileged accesses, reducing overhead and latency

- Hybrid Approaches: Combine hardware virtualization extensions with paravirtualized drivers for balanced performance

This choice is especially critical when designing embedded system architectures that must meet strict timing constraints.

Core Allocation & CPU Affinity: Small Tweaks, Big Impact

Multi-core SoCs give engineers the flexibility to dedicate cores to specific tasks or guests. This is one of the most effective ways to gain predictability and avoid performance jitter.

- Static Partitioning: Hard real-time tasks get exclusive cores.

- CPU Affinity: Pin interrupts and I/O processing to the same cores hosting the guest.

- Avoid Migration: Reduces cache thrashing and ensures more deterministic behavior.

For workloads like motor control, automotive ADAS, or robotics, this dedicated core strategy is essential.
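Inside a Linux guest, the "pin and avoid migration" advice maps onto the standard `sched_setaffinity(2)` call. The sketch below pins the calling thread to one CPU as numbered inside the guest. It assumes the hypervisor has already pinned that vCPU to a physical core, which is configured in the hypervisor itself and not from inside the guest. The helper names are illustrative.

```c
#define _GNU_SOURCE             /* needed for cpu_set_t and the CPU_* macros */
#include <assert.h>
#include <sched.h>

/* Pin the calling thread to a single CPU (guest numbering).
   Returns 0 on success, -1 on failure with errno set by sched_setaffinity. */
int pin_current_thread(int cpu)
{
    cpu_set_t set;
    assert(cpu >= 0 && cpu < CPU_SETSIZE);
    CPU_ZERO(&set);
    CPU_SET(cpu, &set);
    /* pid 0 means "the calling thread" */
    return sched_setaffinity(0, sizeof(set), &set);
}

/* Returns 1 if the calling thread may run only on `cpu`, 0 otherwise. */
int is_pinned_to(int cpu)
{
    cpu_set_t set;
    CPU_ZERO(&set);
    if (sched_getaffinity(0, sizeof(set), &set) != 0)
        return 0;
    return CPU_COUNT(&set) == 1 && CPU_ISSET(cpu, &set);
}
```

Interrupts can be steered to the same core from inside the guest by writing a CPU mask to `/proc/irq/<n>/smp_affinity` (root required), so device IRQs land where the consuming thread runs.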

Memory Management and Isolation Techniques

Virtualization relies heavily on robust memory management, and a well-planned memory layout noticeably reduces virtualization overhead:

1. Use MMUs and Hardware Isolation

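Create aligned, contiguous memory regions for each guest and let the MMU's stage-2 translation (plus an SMMU/IOMMU for DMA-capable devices) enforce them, so no guest can reach another partition's memory.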

2. Reduce TLB Pressure

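Use large pages (for example, 2 MiB blocks instead of 4 KiB pages) for frequently accessed buffers. A 64 MiB buffer needs 16,384 translations with 4 KiB pages but only 32 with 2 MiB blocks. Because a TLB miss in a guest may require walking both the guest's page tables and the hypervisor's stage-2 tables, avoiding misses matters even more under virtualization.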

3. Enable Zero-Copy Mechanisms

For shared data like camera frames or sensor streams, enforce strict ownership rules to minimize copying while maintaining safety.
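A minimal sketch of the ownership rule behind zero-copy sharing, assuming a hypothetical `shared_frame_t` placed in a memory region that the hypervisor maps into both partitions as normal, cache-coherent memory. Only the current owner may touch the payload, and handing a frame over is a single atomic compare-and-swap, so the frame data itself is never copied. The owner IDs and frame size are illustrative.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical owner IDs for the two partitions sharing the region. */
enum { OWNER_PRODUCER = 0, OWNER_CONSUMER = 1 };

#define FRAME_BYTES 4096u  /* illustrative; real camera frames are much larger */

typedef struct {
    _Atomic int owner;     /* which side may touch data[] right now */
    uint32_t    length;    /* valid bytes, written by the current owner */
    uint8_t     data[FRAME_BYTES];
} shared_frame_t;

/* Hand the frame from `from` to `to`, only if `from` currently owns it.
   Release semantics publish all writes to data/length before ownership changes. */
int frame_handoff(shared_frame_t *f, int from, int to)
{
    int expected = from;
    assert(f != NULL);
    return atomic_compare_exchange_strong_explicit(&f->owner, &expected, to,
                                                   memory_order_acq_rel,
                                                   memory_order_acquire) ? 0 : -1;
}

/* Returns the payload only if `who` owns the frame, NULL otherwise.
   Acquire semantics make the previous owner's writes visible. */
uint8_t *frame_access(shared_frame_t *f, int who)
{
    assert(f != NULL);
    if (atomic_load_explicit(&f->owner, memory_order_acquire) != who)
        return NULL;
    return f->data;
}
```

This enforcement is cooperative. A stricter design could have the hypervisor change stage-2 permissions on each handoff, at the cost of a hypercall, and a real system would pair each handoff with a notification (such as an inter-partition interrupt) instead of polling.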

Together, these practices make memory sharing in multi-OS systems both efficient and safe.

I/O Virtualization and Passthrough

I/O is often the bottleneck in virtualized embedded systems. Optimizations include:

- Device Passthrough: Ideal for real-time tasks needing near-native performance.

- Virtualized Drivers or Service VMs: For devices shared between guests.

- Hardware Accelerators: Offload networking, cryptography, or multimedia to reduce CPU load.

Modern SoCs increasingly include virtualization-aware IP blocks, such as IOMMUs/SMMUs that translate and isolate device DMA per guest, making I/O virtualization more efficient.

Hypervisor Scheduling and Latency Optimization

Schedulers have a direct influence on latency and jitter. A few best practices include:

- Use fixed-priority or time-partitioned scheduling for deterministic workloads.

- Keep context switching to a minimum.

- Offload non-critical tasks to best-effort partitions.

- Reduce interrupt contention by assigning interrupts to specific cores.

These tuning steps ensure that even in a virtualized environment, real-time tasks remain predictable.
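Time-partitioned scheduling can be expressed as a static table of windows inside a repeating major frame, in the spirit of ARINC 653-style partition schedulers. The table below is hypothetical (a 10 ms major frame, partition 0 as the RTOS, partition 1 as Linux). It shows how a fixed layout makes each partition's worst-case wait something you read off the table rather than measure.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* One window: partition `pid` runs from `start_us` for `len_us`, relative to frame start. */
typedef struct {
    int      pid;
    uint32_t start_us;
    uint32_t len_us;
} window_t;

#define MAJOR_FRAME_US 10000u  /* assumed 10 ms major frame */
#define IDLE_PID (-1)

/* Illustrative table: the RTOS gets two windows, so it never waits more than 3 ms. */
static const window_t schedule[] = {
    { 0,    0u, 2000u },  /* RTOS control loop */
    { 1, 2000u, 3000u },  /* Linux */
    { 0, 5000u, 2000u },  /* RTOS again */
    { 1, 7000u, 3000u },  /* Linux */
};

/* Windows must be sorted, non-empty, non-overlapping, and fit inside the frame. */
int schedule_valid(const window_t *w, size_t n)
{
    uint32_t end = 0;
    for (size_t i = 0; i < n; i++) {
        if (w[i].len_us == 0 || w[i].start_us < end)
            return 0;
        end = w[i].start_us + w[i].len_us;
        if (end > MAJOR_FRAME_US)
            return 0;
    }
    return 1;
}

/* Which partition owns the core at absolute time t_us? Gaps are idle time. */
int partition_at(const window_t *w, size_t n, uint64_t t_us)
{
    assert(w != NULL);
    uint32_t offset = (uint32_t)(t_us % MAJOR_FRAME_US);
    for (size_t i = 0; i < n; i++)
        if (offset >= w[i].start_us && offset < w[i].start_us + w[i].len_us)
            return w[i].pid;
    return IDLE_PID;
}
```

The hypervisor only has to switch partitions at window boundaries, which also keeps context switches to a small, known number per frame.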

Observability, Debugging, and Performance Tuning

Performance tuning requires detailed observability:

- Fine-Grained Tracing: Track context switches, interrupt latency, DMA events, and cache behavior

- Validate Under Stress: Realistic workloads reveal cross-partition interference and thermal impacts

- Post-Silicon Validation: Ensures virtualization interacts correctly with actual hardware
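One way to collect the wake-up latency figures mentioned above is a periodic loop that sleeps until absolute deadlines and records how late each wake-up is, the same idea used by tools such as `cyclictest`. This is a minimal POSIX sketch; run inside a guest, the measured lateness includes hypervisor scheduling effects, which is exactly what stress testing across partitions should expose. The period and iteration count are arbitrary choices.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <errno.h>
#include <stdint.h>
#include <time.h>

typedef struct {
    int64_t min_ns, max_ns, sum_ns;
    int     samples;
} latency_stats_t;

static int64_t ts_to_ns(const struct timespec *t)
{
    return (int64_t)t->tv_sec * 1000000000LL + t->tv_nsec;
}

static void ts_add_ns(struct timespec *t, long ns)
{
    t->tv_nsec += ns;
    while (t->tv_nsec >= 1000000000L) {
        t->tv_nsec -= 1000000000L;
        t->tv_sec++;
    }
}

/* Sleep until deadlines `period_ns` apart and record how late each wake-up is. */
latency_stats_t measure_wakeup_latency(int iterations, long period_ns)
{
    latency_stats_t s = { INT64_MAX, INT64_MIN, 0, 0 };
    struct timespec next, now;
    assert(iterations > 0 && period_ns > 0 && period_ns < 1000000000L);
    clock_gettime(CLOCK_MONOTONIC, &next);
    for (int i = 0; i < iterations; i++) {
        ts_add_ns(&next, period_ns);
        /* absolute deadlines avoid drift from the previous wake-up time */
        int rc;
        do {
            rc = clock_nanosleep(CLOCK_MONOTONIC, TIMER_ABSTIME, &next, NULL);
        } while (rc == EINTR);              /* retry if interrupted by a signal */
        assert(rc == 0);
        clock_gettime(CLOCK_MONOTONIC, &now);
        int64_t late = ts_to_ns(&now) - ts_to_ns(&next);
        if (late < s.min_ns) s.min_ns = late;
        if (late > s.max_ns) s.max_ns = late;
        s.sum_ns += late;
        s.samples++;
    }
    return s;
}
```

The maximum, not the average, is the number to compare against the real-time budget, and it should be recorded with the other partitions under realistic load.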

Working with an experienced embedded systems company helps optimize and validate these aspects efficiently.

Containers vs. Full Virtual Machines in Embedded Systems

Containers are lightweight but share the host kernel, reducing isolation. Hypervisor-based partitioning remains the gold standard for safety-critical systems.

A hybrid approach is common:

- RTOS partition for real-time control

- Linux guest for applications

- Containers inside Linux for modular workloads

This balances safety, performance, and development flexibility.

Common Design Patterns That Work Well

Here are the patterns that consistently yield strong performance on multi-core SoCs:

- Static partitioning for critical workloads

- Paravirtualized drivers for shared devices

- Zero-copy data paths for sensor-heavy systems

- Leveraging hardware accelerators for networking and AI workloads

- Thoughtful memory and peripheral assignment

When implemented properly, these approaches form a robust design foundation for multi-core embedded platforms.

A Quick Pre-Virtualization Checklist

Before virtualizing your SoC architecture, consider:

- Categorize real-time and non-real-time workloads

- Select a hypervisor supporting your SoC’s virtualization extensions

- Plan core usage and CPU affinity early

- Map out memory and device ownership

- Validate performance under real workloads

- Ensure long-term maintainability and upgrade paths

An experienced embedded systems company will follow these steps in a structured workflow to minimize risk.


Tessolve: Your Partner for Virtualized Multi-Core SoC Development

At Tessolve, we work closely with semiconductor leaders, OEMs, and product innovators to help them harness the full potential of virtualization on multi-core SoCs. Our engineering capabilities span embedded system design, hypervisor integration, device driver development, SoC bring-up, and complete post-silicon validation. With world-class labs, proven test methodologies, and extensive experience across automotive, industrial, IoT, and wireless domains, we ensure your virtualized system is not only high-performing but production-ready. Whether you’re consolidating workloads, improving determinism, or building next-generation embedded architectures, Tessolve delivers end-to-end support to bring your innovation to life with reliability and speed.

Frequently Asked Questions

What is the main purpose of embedded virtualization in multi-core SoCs?

Embedded virtualization enables secure workload separation, controlled resource sharing, and better utilization of multi-core SoCs through hypervisors and hardware-assisted execution.

How does virtualization improve system performance in embedded devices?

It improves overall system performance by isolating tasks to reduce interference, running guests in parallel on separate cores, and allocating CPU, memory, and I/O resources deliberately to each workload.

Is virtualization suitable for real-time embedded applications?

Yes, modern lightweight hypervisors support deterministic scheduling and low-latency pathways, making virtualization effective for many real-time embedded workloads.

What hardware features enable better virtualization on Arm Cortex-A SoCs?

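Features such as the EL2 hypervisor exception level and stage-2 (nested) address translation from the Armv8-A virtualization extensions, a virtualized interrupt controller (the GIC virtual CPU interface), virtual timers, an SMMU for isolating device DMA, and TrustZone secure-world isolation allow efficient, low-overhead hypervisor execution.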
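To make stage-2 translation concrete: the guest's own page tables produce an intermediate physical address (IPA), and the hypervisor's stage-2 tables map each IPA to a host physical address with their own permissions. The sketch below is a deliberately flattened, single-level model using 2 MiB blocks. Real Armv8-A stage-2 tables are multi-level, use the descriptor formats defined in the Arm Architecture Reference Manual, and are walked by hardware starting from `VTTBR_EL2`. All names here are illustrative.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define BLOCK_SHIFT  21                   /* 2 MiB blocks */
#define BLOCK_SIZE   (1ULL << BLOCK_SHIFT)
#define GUEST_BLOCKS 4                    /* toy guest: 8 MiB of IPA space */

typedef struct {
    bool     valid;
    bool     writable;
    uint64_t pa_base;                     /* host physical base, must be 2 MiB aligned */
} s2_entry_t;

/* Returns 0 and sets *pa on success; -1 models a stage-2 fault that traps to the hypervisor. */
int stage2_translate(const s2_entry_t table[GUEST_BLOCKS], uint64_t ipa,
                     bool is_write, uint64_t *pa)
{
    assert(table != NULL && pa != NULL);
    uint64_t idx = ipa >> BLOCK_SHIFT;
    if (idx >= GUEST_BLOCKS || !table[idx].valid)
        return -1;                        /* memory never assigned to this guest */
    if (is_write && !table[idx].writable)
        return -1;                        /* permission fault, e.g. read-only shared region */
    *pa = table[idx].pa_base | (ipa & (BLOCK_SIZE - 1));
    return 0;
}
```

Whatever the guest kernel does with its own page tables, it can only reach the host memory the hypervisor placed in this table, which is the basis of the isolation described throughout this article.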