Autonomy at the edge isn’t just one technology; it’s a choreography of sensors, control algorithms, hardware-software integration, and continuous learning. And as devices become smarter, smaller, and more connected, the way they make decisions in real time becomes just as important as the hardware powering them. The shift isn’t about adding AI for the sake of innovation; it’s about creating systems that learn, adapt, and respond intelligently without relying on the cloud.

In this blog, we’ll explore how AI is enhancing control loops in autonomous embedded devices. We’ll also look at what evolves, what stays essential, and how engineers can build systems that are robust, efficient, and safe without getting lost in buzzwords.

Why Control Loops Matter in On-Device Intelligence

A control loop is the heartbeat of any autonomous system: sense, decide, act, and re-evaluate.

Traditional loops rely mostly on deterministic control strategies like PID or model predictive control. These methods excel in predictable environments but struggle when uncertainty, environmental variability, and dynamic contexts are introduced.
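To make the sense, decide, act, and re-evaluate cycle concrete, here is a minimal sketch of a discrete PID loop driving a toy first-order plant. The gains, the plant model, and the names `PID` and `simulate` are illustrative assumptions, not taken from any specific product.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Textbook discrete PID controller with output clamping (illustrative)."""
    kp: float
    ki: float
    kd: float
    out_min: float
    out_max: float
    integral: float = 0.0
    prev_error: float = 0.0

    def step(self, setpoint: float, measurement: float, dt: float) -> float:
        error = setpoint - measurement
        candidate_integral = self.integral + error * dt
        derivative = (error - self.prev_error) / dt
        raw = self.kp * error + self.ki * candidate_integral + self.kd * derivative
        out = min(self.out_max, max(self.out_min, raw))
        # Simple anti-windup: accumulate the integral only when not saturated.
        if out == raw:
            self.integral = candidate_integral
        self.prev_error = error
        return out


def simulate(steps: int = 200, dt: float = 0.05) -> float:
    """Run the loop against an assumed toy plant dx/dt = -x + u."""
    pid = PID(kp=2.0, ki=1.0, kd=0.0, out_min=-10.0, out_max=10.0)
    state = 0.0
    for _ in range(steps):
        measurement = state                        # sense
        command = pid.step(1.0, measurement, dt)   # decide
        state += dt * (-state + command)           # act (Euler step of the plant)
    return state                                   # re-evaluated on the next cycle
```

A loop like this behaves well while the plant matches the assumed model. The harder cases the article describes arise when the real dynamics drift away from it.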

AI doesn’t replace classical control; it enhances it. Neural networks and learning algorithms help systems:

- Adapt to changing conditions in real time

- Handle noisy or incomplete sensor data

- Generalize to use cases that were not explicitly programmed

In modern systems, machine learning augments perception and decision-making while classical control ensures stability and safety.

Core Components of an AI-Enabled Control Loop

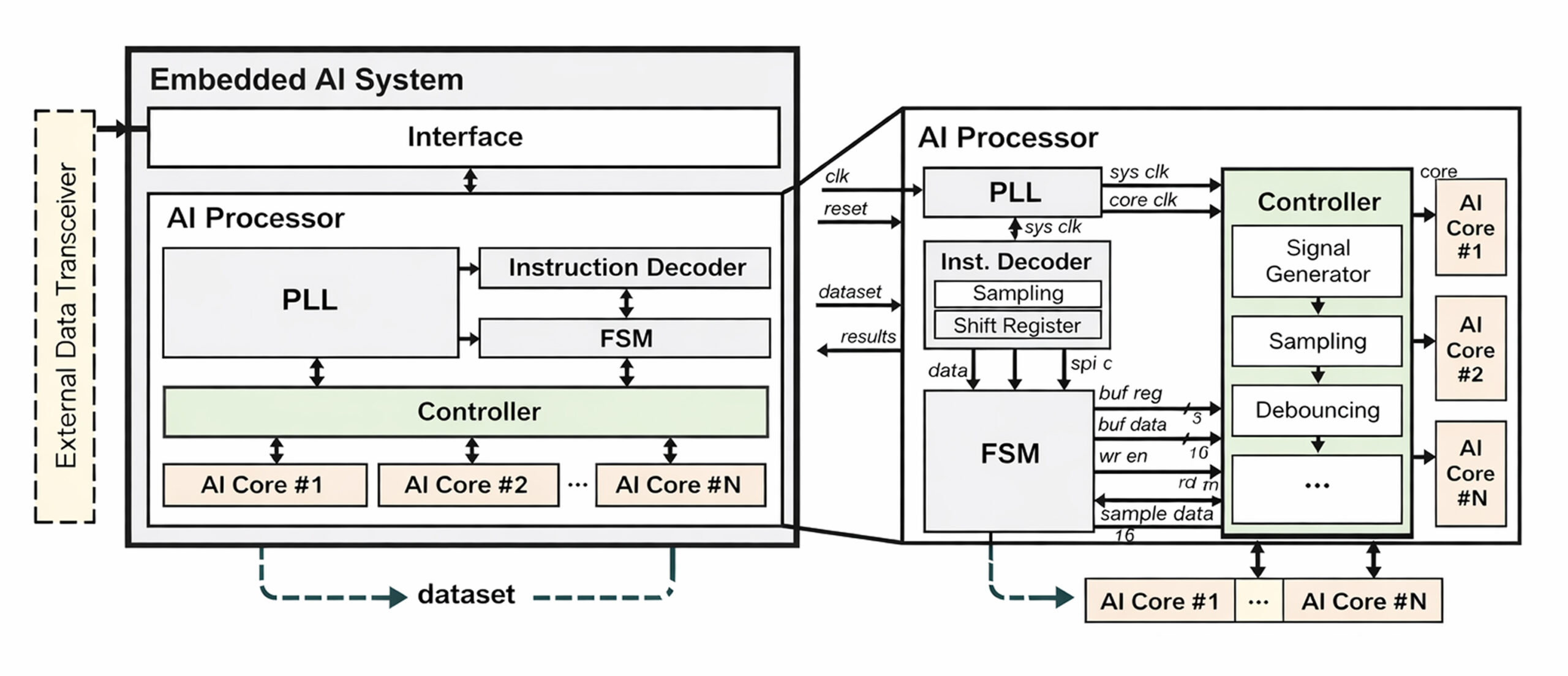

AI-driven control systems typically integrate several layered subsystems that work together to sense the environment, process data, and make autonomous decisions. A high-level view often looks like this:

Block diagram of embedded AI system and AI processor

This diagram illustrates how processing units, AI cores, interface controllers, and signal conditioning work together in an embedded learning system.

Breakdown of Key Components

1. Perception Layer

This layer converts raw sensor streams (camera, IMU, LIDAR, etc.) into meaningful structured data. Lightweight neural networks or compressed models help maintain low latency.

2. State Estimation / Prediction

ML models and probabilistic estimators fuse sensor outputs to derive real-time system state and future behavior.
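As one example of a probabilistic estimator, the sketch below fuses readings from several sensors with a scalar Kalman filter. It assumes a slowly varying state and known noise variances; the function name `fuse_step` and all numeric values are hypothetical.

```python
def fuse_step(x, p, readings, process_var=1e-3):
    """One predict/update cycle of a scalar Kalman filter.

    x, p      -- current state estimate and its variance
    readings  -- iterable of (measurement, measurement_variance) pairs,
                 one per sensor sampled in this cycle
    """
    # Predict: the state is assumed roughly constant, so only uncertainty grows.
    p = p + process_var
    # Update: fold in each sensor, weighted by how much it is trusted.
    for z, r in readings:
        k = p / (p + r)          # Kalman gain in [0, 1)
        x = x + k * (z - x)
        p = (1.0 - k) * p
    return x, p
```

A sensor with low variance pulls the estimate strongly toward its reading, while a noisy sensor contributes only a small correction.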

3. Planning & Decision-Making

Control logic or reinforcement learning-based systems decide movement, responses, or task sequences.

4. Adaptive Controller

Classical control ensures stability, while AI compensates for dynamic, unmodeled behaviors.
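One common way to combine the two is to let a learned model add a bounded correction on top of a classical command. The sketch below assumes a proportional baseline and a `residual_model` callable standing in for any trained model; both names and the limit are illustrative.

```python
import math
from typing import Callable, Sequence


def hybrid_command(
    error: float,
    baseline_gain: float,
    residual_model: Callable[[Sequence[float]], float],
    features: Sequence[float],
    residual_limit: float,
) -> float:
    """Classical baseline plus a learned correction with limited authority."""
    baseline = baseline_gain * error            # deterministic, analyzable part
    residual = residual_model(features)         # learned compensation
    if not math.isfinite(residual):
        residual = 0.0                          # ignore NaN/inf model outputs
    # Clamp so the model can only nudge the command, never dominate it.
    residual = max(-residual_limit, min(residual_limit, residual))
    return baseline + residual
```

Because the correction is clamped, the worst-case deviation from the classical command is known in advance, which keeps the stability analysis tractable.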

5. Safety & Fallback Layer

This layer keeps system behavior within predictable, verified bounds, even when the AI misinterprets its input.
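A fallback layer can be as simple as a supervisor that checks each AI-proposed command for plausibility before it reaches the actuator. The following is a sketch under assumed checks (range, rate of change, model confidence); `SafetyEnvelope` and `supervise` are hypothetical names, and a real system would derive its limits from a hazard analysis.

```python
import math
from dataclasses import dataclass


@dataclass
class SafetyEnvelope:
    cmd_min: float         # lowest command the actuator may receive
    cmd_max: float         # highest command the actuator may receive
    max_step: float        # largest allowed change per control cycle
    min_confidence: float  # below this, the AI output is not trusted


def supervise(ai_cmd, confidence, last_cmd, fallback_cmd, env):
    """Return (command, used_fallback) after checking the AI proposal."""
    plausible = (
        math.isfinite(ai_cmd)
        and env.cmd_min <= ai_cmd <= env.cmd_max
        and abs(ai_cmd - last_cmd) <= env.max_step
        and confidence >= env.min_confidence
    )
    if plausible:
        return ai_cmd, False
    # Any failed check hands control to the deterministic fallback command.
    return fallback_cmd, True
```

The `used_fallback` flag is worth logging, since a rising fallback rate in the field is an early sign that the model no longer matches its environment.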

Effective Design Patterns

1. Hybrid Architecture

Maintain a verifiable, deterministic control base and surround it with ML enhancements rather than replacing it altogether.

2. Model Compression & Optimization

Techniques like pruning, quantization, and distillation allow complex models to run efficiently on resource-constrained platforms.
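To show what quantization does at its simplest, here is a sketch of symmetric per-tensor int8 quantization in plain Python. Production toolchains add calibration, per-channel scales, and quantization-aware training; the function names below are illustrative.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] with one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the quantized integers."""
    return [v * scale for v in q]
```

Each weight now fits in one byte instead of four, at the cost of a rounding error of at most half a quantization step (`scale / 2`) per weight.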

3. Structured On-Device Learning

Limited incremental learning can be allowed, but only in a sandboxed environment, so that unvalidated updates cannot cause model drift in the live controller.
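One possible form of sandboxing is shadow evaluation: the adapted model runs alongside the deployed one on recent data and is promoted only if it performs measurably better. The sketch below assumes models are plain callables and uses mean squared error as the metric; `promote_if_better` and `margin` are hypothetical names.

```python
def promote_if_better(deployed, candidate, samples, margin=0.0):
    """Return the model that should drive the live loop.

    samples -- list of (input, target) pairs recorded from recent operation
    margin  -- how much lower the candidate's error must be to be promoted
    """
    if not samples:
        return deployed  # no evidence, keep the known-good model

    def mse(model):
        return sum((model(x) - y) ** 2 for x, y in samples) / len(samples)

    # The candidate never touches the actuator during this comparison.
    if mse(candidate) + margin < mse(deployed):
        return candidate
    return deployed
```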

4. Digital Twin Validation

Before deployment, simulate updates or new models in virtual replicas of the live environment to prevent costly field failures.

Engineering Constraints to Navigate

Building AI-enabled embedded systems often requires solving constraints like:

- Power Efficiency: AI inference should operate within tight power budgets

- Latency Requirements: Real-time decisions must remain predictable

- Model Explainability: Debuggability and traceability are essential for troubleshooting

- Certification for Safety-Critical Domains: AI behavior must be governed by strict runtime assurances

All of this reinforces the need for disciplined development workflows, especially when scaling from prototype to production.

Prototype-to-Product Workflow

A mature workflow typically includes:

- Simulation-based prototyping

- Hardware-in-the-loop (HIL) validation

- Model compression and firmware integration

- Telemetry-driven field deployment

- Controlled OTA updates with rollback support
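The last step, controlled updates with rollback, can be sketched as a trial-then-commit pattern: the new version runs on trial, and the device reverts to the known-good version if a health check fails. `UpdateManager` and the `health_check` callable are assumptions made for illustration; real devices typically implement this with A/B partitions and a bootloader flag.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class UpdateManager:
    active_version: str
    previous_version: Optional[str] = None

    def apply_update(self, candidate: str,
                     health_check: Callable[[str], bool]) -> bool:
        """Trial the candidate; keep it only if the health check passes."""
        known_good = self.active_version
        self.active_version = candidate          # trial run
        try:
            healthy = health_check(candidate)
        except Exception:
            healthy = False                      # a crashing check counts as failure
        if healthy:
            self.previous_version = known_good   # commit, remember rollback target
            return True
        self.active_version = known_good         # roll back
        return False
```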

It’s a lifecycle that requires both agility and precision.

Real-World Use Cases

Autonomous Industrial Robotics

- Better perception for object recognition

- Learning-based compensation for payload changes

- Safe fallback control behaviors in shared spaces

Next-Gen Autonomous Drones

- Wind disturbance prediction

- On-device path planning

- Navigation without cloud dependency

Why Productization Requires More Than AI Models

Moving from prototype to production demands strong engineering discipline, including end-to-end testing, silicon bring-up, and validation cycles.

At this stage, many teams rely on an external embedded system company for complete lifecycle support, from concept to production testing and compliance.

This is where specialized engineering partners offering hardware, firmware, and system-level expertise play a critical role, especially when bridging machine learning with classical embedded system design principles.

When Advanced Engineering Meets Scalable Deployment

To scale products reliably, organizations often require an advanced design solution partner: not just model developers, but teams experienced in test engineering, edge optimization, and real-world deployment.

Only then can AI-enabled embedded control loops evolve from experimental research to dependable, field-grade technology.

Tessolve: Engineering AI-Ready Embedded Systems

At Tessolve, our mission is to help teams accelerate the development and deployment of next-generation autonomous products. As a global embedded system company, we support innovation from concept to commercialization through silicon design, hardware engineering, and post-silicon validation.

We provide comprehensive embedded system design services, including architecture, firmware development, board bring-up, and system integration, to turn prototypes into reliable market-ready solutions. Our global labs and engineering centers enable deep validation for RF, mixed-signal, sensing, and edge AI platforms.

For organizations focused on designing embedded system architectures or scaling AI-enabled devices, Tessolve offers an advanced design solution approach rooted in test-driven development, real-world robustness, and industry-ready reliability. With a strong semiconductor foundation and multidisciplinary engineering teams, we ensure your path from idea to production is faster, safer, and future-proof.

Frequently Asked Questions (FAQs)

1. How is AI different from traditional control algorithms in embedded systems?

AI enables adaptive decision-making and handles unpredictable conditions, while traditional control relies on fixed logic and predefined mathematical models.

2. Do AI-enabled embedded systems always require cloud connectivity?

No. Many models run locally using edge inference, which provides low latency and reliable operation even without internet access.

3. What hardware is needed for AI-based control loops?

It depends on complexity, but typical options include microcontrollers, edge processors, accelerators, and low-power Neural Processing Units (NPUs).

4. Can AI-enabled control loops be used in safety-critical applications?

Yes, but they require a hybrid design with classical control, safety monitoring, testing, and regulatory compliance to ensure predictable behavior.