As edge computing continues to gain traction, the need for real-time data processing and low-latency analytics is transforming system design across industries. From autonomous vehicles and smart infrastructure to healthcare and industrial automation, modern applications demand intelligent decision-making at the edge—right where the data is generated.

To meet these performance and efficiency demands, engineers are increasingly integrating FPGA (Field-Programmable Gate Array) co-processors into edge systems. Known for their parallel processing capabilities, reconfigurability, and low power consumption, FPGAs have emerged as a powerful complement to traditional CPUs and GPUs.

This blog explores how FPGA co-processors enable real-time analytics at the edge and outlines best practices for effectively integrating them into embedded system designs.

Why FPGAs for Edge Intelligence?

While traditional processors excel at general-purpose computing, they struggle with many edge workloads, such as video analytics, object detection, and continuous low-latency processing, which combine high compute intensity with strict latency requirements.

That is where FPGAs excel. They can be programmed to execute specific logic functions in parallel, which can raise throughput and cut latency relative to CPUs and GPUs on suitable workloads. They are also reconfigurable, so designers can roll out updates and optimizations without changing the physical hardware, which matters in a rapidly changing edge environment.

Key benefits include:

- Deterministic Performance: Critical for applications requiring millisecond-level responsiveness.

- Parallelism: Ideal for tasks like signal processing, image recognition, and encryption.

- Energy Efficiency: Often lower power consumption than GPUs performing similar tasks.

- Customizability: Tailored logic pathways reduce the overhead seen in software layers.
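To make the determinism point concrete, here is a minimal sketch of how latency can be estimated for a pipelined hardware loop. It uses the standard relation that a pipeline needs its fill depth plus one initiation interval for each additional item. The function names and the example figures (pipeline depth, clock rate) are assumptions chosen for illustration, not measurements from any particular device.

```cpp
#include <cassert>
#include <cstdint>

// Cycles needed for a pipelined loop to process `items` inputs.
// depth: cycles for one item to travel through the pipeline (assumed value)
// ii:    initiation interval, i.e. cycles between accepting consecutive items
constexpr std::uint64_t pipeline_cycles(std::uint64_t items,
                                        std::uint64_t depth,
                                        std::uint64_t ii) {
    return items == 0 ? 0 : depth + ii * (items - 1);
}

// Convert a cycle count to nanoseconds for a clock given in MHz.
inline double cycles_to_ns(std::uint64_t cycles, double clock_mhz) {
    assert(clock_mhz > 0.0);
    return static_cast<double>(cycles) * 1000.0 / clock_mhz;
}
```

With an assumed depth of 12 cycles, an initiation interval of 1, and 1024 samples at 200 MHz, this gives 1035 cycles, or about 5.2 microseconds. Because the cycle count does not depend on caches or scheduling, the figure is the same on every run.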

When paired with the right design tools and methodology, FPGA co-processors can become the cornerstone of an agile, high-performance edge system.

Best Practices for Integrating FPGA Co-Processors at the Edge

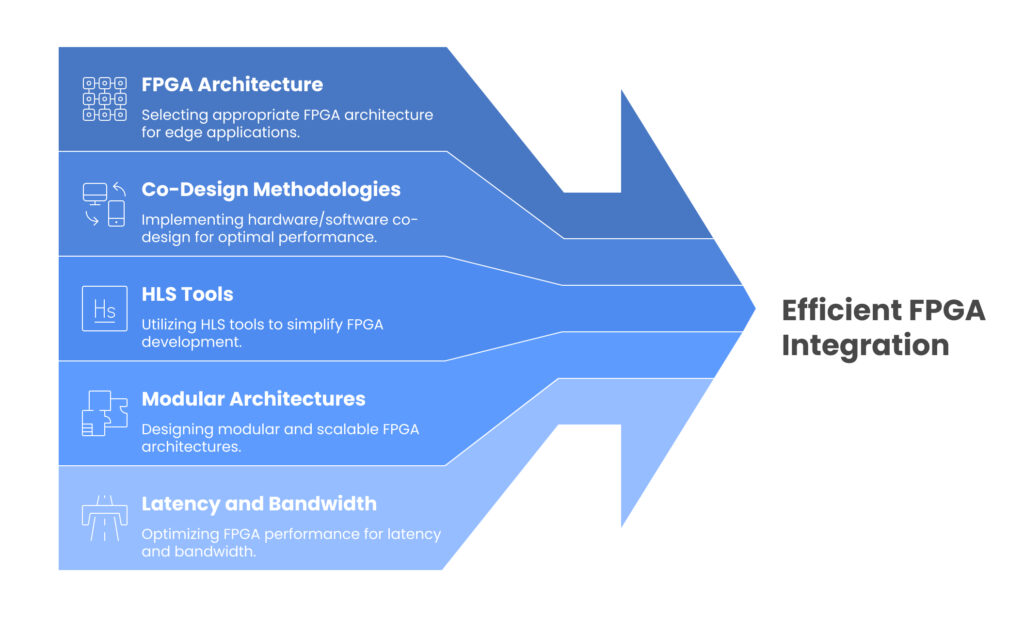

1. Choose the Right FPGA Architecture

Not all FPGAs are created equal. While high-end FPGAs offer immense power, they also consume more energy and incur higher costs. For edge applications, consider mid-range or low-power variants that strike a balance between performance and efficiency.

Platforms like Xilinx Zynq UltraScale+ MPSoC and the SoC variants of Intel Agilex F-Series are well suited to edge scenarios, offering embedded Arm cores that work alongside the FPGA logic on the same device.

2. Adopt Hardware/Software Co-Design Methodologies

Effective edge systems require tight coupling between hardware and software. A co-design approach ensures that compute-intensive tasks are offloaded to the FPGA, while control logic and communication layers run on a CPU or microcontroller.

This collaborative design structure is now standard practice in embedded system design, ensuring that each part of the system is optimized for its intended role.
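One way to reason about the partitioning decision is a simple break-even estimate: offloading pays off only when the FPGA time plus the data transfer time beats the CPU time by a useful margin. The sketch below is a hypothetical helper, not part of any vendor toolkit. The `TaskProfile` fields and the default 1.5x threshold are assumptions you would replace with profiled numbers.

```cpp
#include <cassert>

// Timing profile of one candidate task (all values are assumed inputs,
// typically taken from profiling and from synthesis estimates).
struct TaskProfile {
    double cpu_ms;       // measured run time on the CPU
    double fpga_ms;      // estimated kernel time on the FPGA
    double transfer_ms;  // time to move inputs and results across the bus
};

// Effective speedup once transfer overhead is included.
inline double offload_speedup(const TaskProfile& t) {
    assert(t.cpu_ms > 0.0 && t.fpga_ms >= 0.0 && t.transfer_ms >= 0.0);
    const double offloaded_ms = t.fpga_ms + t.transfer_ms;
    assert(offloaded_ms > 0.0);
    return t.cpu_ms / offloaded_ms;
}

// Offload only when the gain clears a margin that justifies the effort.
inline bool should_offload(const TaskProfile& t, double min_speedup = 1.5) {
    return offload_speedup(t) >= min_speedup;
}
```

For example, a convolution stage profiled at 40 ms on the CPU, 4 ms on the FPGA, and 6 ms of transfer yields a 4x speedup and is worth offloading. A short control routine with 0.5 ms of CPU time and 1 ms of transfer is better left on the CPU.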

3. Leverage High-Level Synthesis (HLS) Tools

One of the traditional barriers to FPGA adoption was the need for specialized hardware design skills. HLS tools now let developers describe FPGA logic in high-level languages like C/C++, allowing software engineers to contribute directly.

This shortens design turnaround time and eases integration with the rest of the design flow, especially when combined with modern EDA (Electronic Design Automation) tools.
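As an illustration of what HLS source can look like, here is a minimal sketch of a FIR filter in plain C++. The `#pragma HLS` lines follow Vitis HLS directive syntax, and an ordinary C++ compiler ignores them, so the same file can be checked in software first. The filter length, the function names, and the small testbench are assumptions made for this example. Real designs would also choose bit widths and interfaces to match the target device.

```cpp
#include <cassert>
#include <cstdint>

constexpr int kTaps = 4;  // hypothetical filter length chosen for illustration

// FIR filter in the restricted C++ style HLS tools generally expect:
// fixed-size arrays, static loop bounds, no dynamic allocation.
void fir_filter(const std::int16_t* in, std::int32_t* out, int n,
                const std::int16_t coeff[kTaps]) {
    std::int16_t shift_reg[kTaps] = {0};
    // Directive: split the delay line into registers so every tap can be
    // read in the same clock cycle.
#pragma HLS ARRAY_PARTITION variable=shift_reg complete
    for (int i = 0; i < n; ++i) {
        // Directive: ask the tool to accept one new sample per clock cycle.
#pragma HLS PIPELINE II=1
        for (int k = kTaps - 1; k > 0; --k) {
            shift_reg[k] = shift_reg[k - 1];  // advance the delay line
        }
        shift_reg[0] = in[i];

        std::int32_t acc = 0;
        for (int k = 0; k < kTaps; ++k) {
            // Multiply-accumulate across all taps.
            acc += static_cast<std::int32_t>(shift_reg[k]) * coeff[k];
        }
        out[i] = acc;
    }
}

// Software testbench: an impulse input should reproduce the coefficients,
// followed by zero once the impulse has left the delay line.
bool fir_impulse_test() {
    const std::int16_t coeff[kTaps] = {1, 2, 3, 4};
    const std::int16_t in[kTaps + 1] = {1, 0, 0, 0, 0};
    std::int32_t out[kTaps + 1] = {0};
    fir_filter(in, out, kTaps + 1, coeff);
    for (int k = 0; k < kTaps; ++k) {
        if (out[k] != coeff[k]) return false;
    }
    return out[kTaps] == 0;
}
```

Running the testbench on a workstation before synthesis is a common way to catch functional bugs early, since a software build takes seconds while a hardware build can take much longer.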

4. Emphasize Modular and Scalable Architectures

Design your system with modularity in mind. Each function, whether image filtering, signal transformation, or AI inference, should be developed as a reusable block. This allows for easier scaling and updates, and suits projects where different teams or vendors handle different modules.

Using IP (Intellectual Property) cores or open-source hardware accelerators can also shorten time-to-market.
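On the host side, modularity can be mirrored by giving every block the same small interface, so a stage can be swapped for a different implementation without touching the rest of the pipeline. The sketch below is a software model only. `ProcessingBlock`, `Gain`, `Threshold`, and `Pipeline` are hypothetical names, and in a real system each block's `process` method might wrap a driver call to an FPGA IP core rather than compute in software.

```cpp
#include <cassert>
#include <cstdint>
#include <memory>
#include <utility>
#include <vector>

using Frame = std::vector<std::int32_t>;

// Common interface that every reusable block implements.
class ProcessingBlock {
public:
    virtual ~ProcessingBlock() = default;
    virtual Frame process(const Frame& in) = 0;
};

// Example block: multiply every sample by a fixed factor.
class Gain : public ProcessingBlock {
public:
    explicit Gain(std::int32_t factor) : factor_(factor) {}
    Frame process(const Frame& in) override {
        Frame out;
        out.reserve(in.size());
        for (std::int32_t v : in) out.push_back(v * factor_);
        return out;
    }
private:
    std::int32_t factor_;
};

// Example block: zero out samples below a minimum level.
class Threshold : public ProcessingBlock {
public:
    explicit Threshold(std::int32_t min_level) : min_level_(min_level) {}
    Frame process(const Frame& in) override {
        Frame out;
        out.reserve(in.size());
        for (std::int32_t v : in) out.push_back(v < min_level_ ? 0 : v);
        return out;
    }
private:
    std::int32_t min_level_;
};

// Chains blocks in order; stages can be added or replaced independently.
class Pipeline {
public:
    Pipeline& add(std::unique_ptr<ProcessingBlock> stage) {
        stages_.push_back(std::move(stage));
        return *this;
    }
    Frame run(Frame data) const {
        for (const auto& stage : stages_) data = stage->process(data);
        return data;
    }
private:
    std::vector<std::unique_ptr<ProcessingBlock>> stages_;
};
```

A pipeline built from `Gain(2)` followed by `Threshold(5)` turns the frame `{1, 3, 4}` into `{0, 6, 8}`. Replacing either stage with a hardware-backed version would not change the calling code.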

5. Optimize for Latency and Bandwidth

Even the fastest FPGA won’t help if data bottlenecks occur elsewhere in the pipeline. To ensure end-to-end efficiency:

- Use DMA (Direct Memory Access) for rapid data movement.

- Minimize off-chip memory access by using on-chip block RAM.

- Analyze bottlenecks using simulation and emulation tools before deployment.

These strategies ensure you unlock the full potential of your embedded system design.
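A quick estimate can show whether data movement or computation limits the pipeline. The sketch below compares processing chunks one after another with a double-buffered (ping-pong) scheme, where the DMA transfer of the next chunk overlaps computation on the current one. The structure and function names are hypothetical, and the timings are assumed inputs you would take from measurement or simulation.

```cpp
#include <algorithm>
#include <cassert>

// Per-chunk timings in microseconds (assumed inputs).
struct StageTimes {
    double transfer_us;  // DMA time to move one chunk into on-chip memory
    double compute_us;   // FPGA time to process one chunk
};

// No overlap: each chunk is transferred, then processed.
inline double serial_time_us(int chunks, const StageTimes& t) {
    assert(chunks >= 0);
    return chunks * (t.transfer_us + t.compute_us);
}

// Double buffering: after the first transfer, the slower of the two stages
// sets the pace, and the last chunk still needs its compute time.
inline double double_buffered_time_us(int chunks, const StageTimes& t) {
    assert(chunks >= 0);
    if (chunks == 0) return 0.0;
    const double steady_us = std::max(t.transfer_us, t.compute_us);
    return t.transfer_us + (chunks - 1) * steady_us + t.compute_us;
}

// True when data movement, not computation, is the bottleneck.
inline bool is_transfer_bound(const StageTimes& t) {
    return t.transfer_us > t.compute_us;
}
```

With an assumed 10 microseconds of transfer and 6 microseconds of compute per chunk, 100 chunks take 1600 microseconds serially and 1006 microseconds with double buffering. The example is transfer bound, so a faster kernel would not help until data movement is improved.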

Real-Time Applications Where FPGA Co-Processors Excel

FPGA-based edge systems are being deployed in a variety of sectors:

- Industrial IoT (IIoT): Real-time machine diagnostics, robotic control, and predictive maintenance.

- Healthcare: Portable diagnostic tools for ECG and ultrasound analysis.

- Autonomous Vehicles: Sensor fusion, object detection, and real-time decision-making.

- Smart Surveillance: High-speed video processing, facial recognition, and anomaly detection.

- Telecommunications: 5G edge nodes using FPGAs for network packet acceleration and load balancing.

In each of these areas, the ability to process data with minimal latency and adapt to changing workloads has become mission-critical, making FPGAs a strong choice.

Partnering with the Right Expert: Tessolve

Creating intelligent edge systems with FPGAs calls for much more than hardware skills. It requires a fundamental understanding of system-level integration, firmware development, and AI acceleration approaches. Tessolve can help.

As a leading embedded system company, Tessolve offers complete engineering services, including board design and validation, FPGA programming, and system-level testing capabilities. Many world-class companies lean on Tessolve’s proven advanced design solution offerings in the automotive, industrial, and semiconductor sectors.

Tessolve has a clear focus on innovation. This helps our customers develop future-proof, scalable, and optimized FPGA-based edge solutions. Whether you’re developing a new device or optimizing an existing solution, Tessolve’s engineering team backs everything with domain knowledge and proven approaches.

Conclusion: The New Era of Embedded Speed and Agility

Edge computing is redefining the way data is processed, making speed, agility, and responsiveness non-negotiable. FPGA co-processors are at the heart of this transformation—enabling parallel execution, real-time processing, and flexible system architectures that can adapt to evolving workloads.

But achieving success with FPGAs goes beyond choosing the right hardware. It requires a system-level approach that blends hardware/software co-design, high-level synthesis, and scalable architecture strategies. By implementing these best practices and partnering with an expert embedded system company like Tessolve, organizations can accelerate development and maximize the value of their edge deployments.

In this new era of embedded intelligence, FPGA co-processors aren’t just an option—they’re a competitive advantage.